According to the CSA/Aembit report Identity and Access Gaps in the Age of Autonomous AI, only 15% of organizations say AI agents are not used in production; the majority are deploying them across workflows, infrastructure, and security operations.

The Cloud Security Alliance survey report, commissioned by Aembit, examines how enterprises are actually managing AI agent identities and permissions today, and whether existing identity and access management (IAM) models are keeping pace with the agents' expanding operational role.

AI agents are rapidly becoming embedded across enterprise technology environments, interacting with applications, infrastructure, data platforms, and development pipelines. As these systems assume more autonomous operational roles, they introduce new challenges for identity management, access control, and governance.

The report's six key findings are:

1. AI Agents Are Already Operating Across Enterprise Systems

2. Most AI Agents Do Not Operate as Distinct Identities

3. No Single Team Clearly Owns AI Agent Identity and Access

4. Confidence in AI Agent Access Exceeds Control Maturity

5. AI Agents Often Inherit Access and Expand the Attack Surface

6. Governance Mechanisms Are Compensating for Missing Identity Controls

Key Takeaway

AI agents are already operating across enterprise environments, yet identity and access practices have not fully adapted to manage them. Many organizations rely on inherited permissions, fragmented ownership, and governance safeguards rather than identity-centric controls. As AI agents gain autonomy and operational scope, extending core IAM principles—clear identity separation, least privilege access, and continuous visibility—will be critical to managing risk and enabling secure adoption.

1. AI Agents Are Already Operating Across Enterprise Systems

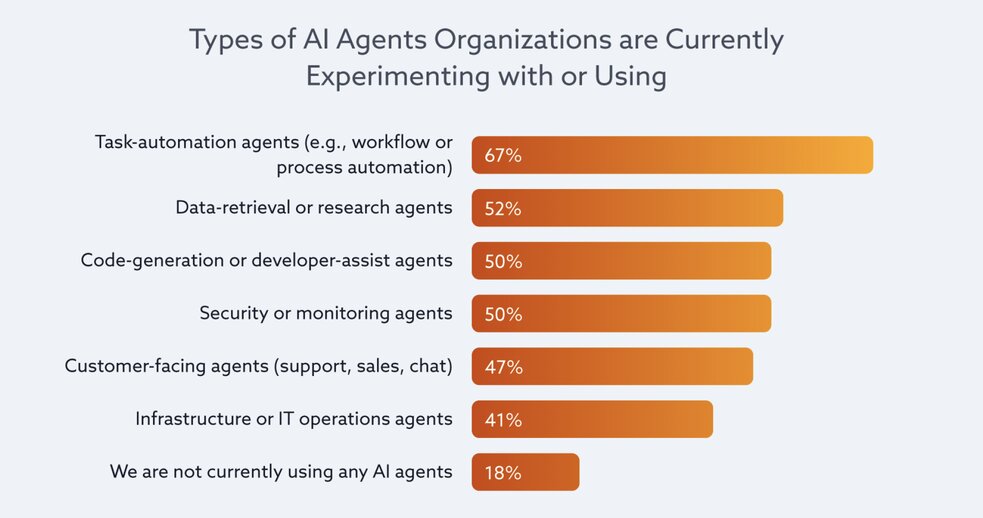

Agentic AI is already embedded in enterprise environments and expanding across critical workflows. Task-automation agents are reported by 67% of organizations, making automation the most common entry point. Adoption, however, extends well beyond efficiency use cases: more than half report data-retrieval or research AI agents (52%), and 50% each report code-generation or developer-assist AI agents and security or monitoring AI agents. Infrastructure or IT operations AI agents are in use by 41%, while only 18% indicate no use of AI agents at all.

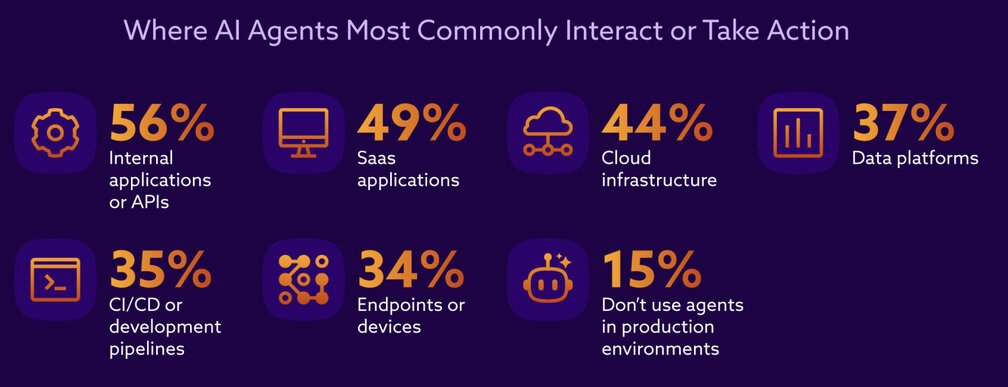

AI agent activity is occurring in environments where identity scoping and access control have operational consequences.

More than one-third of respondents report that AI agents interact with data platforms (37%), CI/CD or development pipelines (35%), and endpoints or devices (34%). Only 15% report that AI agents are not used in production environments, suggesting that most deployments extend beyond isolated test settings. As AI agents operate across internal systems, external platforms, and infrastructure layers, the number of integration points and access pathways expands. This cross-environment interaction makes it harder to maintain consistent identity governance and permission boundaries.

2. Most AI Agents Do Not Operate as Distinct Identities – They Borrow One

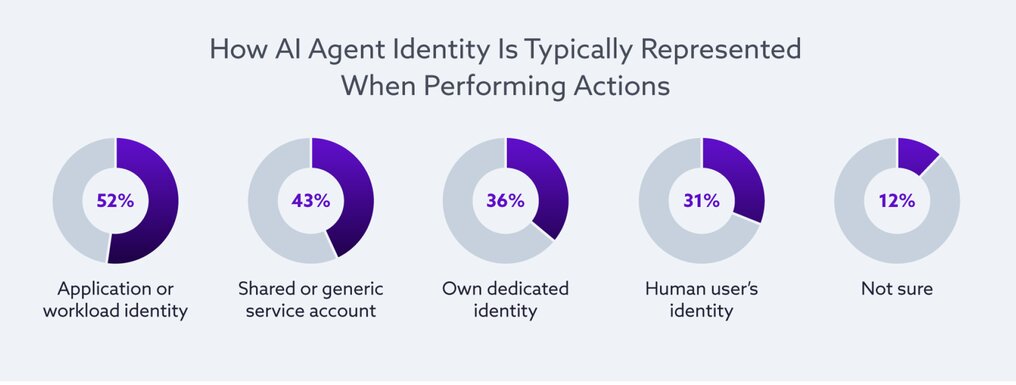

AI agents do not fit cleanly into existing human or traditional machine identity categories, and current security models reflect that ambiguity. They are consistently treated neither as human users nor as first-class machine identities. No single model dominates, and multiple approaches often coexist within the same environment. Rather than reflecting a clear taxonomy, this distribution suggests a patchwork of identity treatments applied to AI agents.

The way an AI agent is represented directly shapes how permissions are granted and enforced. When AI agents operate under human identities or shared service accounts, they inherit the full set of permissions attached to those accounts, whether or not those permissions align with the AI agent’s intended role. Over time, this inheritance can unintentionally expand access scope and introduce policy drift, particularly when the underlying accounts were originally provisioned for interactive users or broader operational responsibilities.
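To make the inheritance effect concrete, here is a minimal Python sketch. The permission strings, the human account's grants, and the agent's task scope are all hypothetical and are not taken from the report. It compares what an agent can exercise when it runs under a borrowed human account with what its task actually needs; the set difference is the excess privilege described above.

```python
# Hypothetical permission sets; names are illustrative only.
HUMAN_ACCOUNT_GRANTS = frozenset({
    "crm:read", "crm:write", "billing:read", "billing:refund",
    "hr:read", "repo:push",
})

# Hypothetical scope the agent's task (e.g. summarizing CRM tickets) requires.
AGENT_TASK_NEEDS = frozenset({"crm:read"})


def effective_permissions(borrowed_identity: bool) -> frozenset:
    """Return the permissions the agent can exercise.

    Under a borrowed human identity the agent inherits every grant on that
    account; with its own identity it receives only its task scope.
    """
    return HUMAN_ACCOUNT_GRANTS if borrowed_identity else AGENT_TASK_NEEDS


def excess_privilege(borrowed_identity: bool) -> frozenset:
    """Permissions the agent holds that its task does not need."""
    return effective_permissions(borrowed_identity) - AGENT_TASK_NEEDS


if __name__ == "__main__":
    print(sorted(excess_privilege(borrowed_identity=True)))
    print(sorted(excess_privilege(borrowed_identity=False)))
```

Under these assumptions the borrowed identity carries five permissions the task never uses, while the dedicated identity carries none. Scoping at provisioning time means no later governance step is needed to claw back unused grants.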

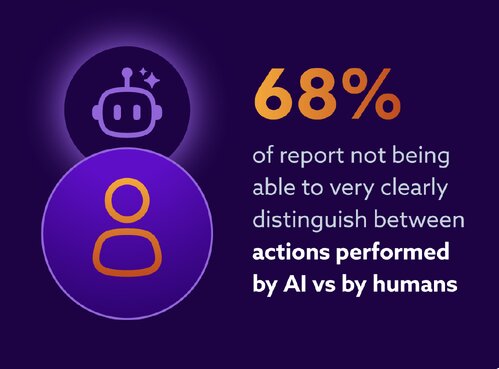

Only 32% of organizations report being able to very clearly distinguish between actions performed by AI agents and those performed by humans; the remaining 68% cannot make that distinction with the same clarity.

Strong, unambiguous attribution is therefore not universal. In environments where AI agents operate under human or shared identities, separating AI agent-initiated activity from human activity becomes more challenging, introducing friction for monitoring, forensic analysis, and accountability.
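One common way to preserve attribution is to record both the identity that performed an action and the human it acted on behalf of, loosely modeled on the actor (`act`) claim in OAuth 2.0 Token Exchange (RFC 8693). The sketch below is hypothetical: the `AuditEvent` fields, the `agent:` prefix convention, and the identity strings are illustrative assumptions, not a schema from the report or any product. It shows how a distinct agent principal lets a simple filter separate agent-initiated actions from human ones.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AuditEvent:
    """Hypothetical audit record, for illustration only."""
    actor: str                   # identity that actually performed the action
    on_behalf_of: Optional[str]  # human who delegated the task, if any
    action: str


def is_agent_initiated(event: AuditEvent) -> bool:
    # Assumption: agent identities carry an "agent:" prefix by convention.
    return event.actor.startswith("agent:")


events = [
    AuditEvent(actor="user:alice", on_behalf_of=None, action="crm:update"),
    AuditEvent(actor="agent:ticket-summarizer", on_behalf_of="user:alice",
               action="crm:read"),
    # Borrowed identity: an agent ran as alice, so the record looks human.
    AuditEvent(actor="user:alice", on_behalf_of=None, action="billing:refund"),
]

agent_actions = [e.action for e in events if is_agent_initiated(e)]
print(agent_actions)
```

The third record illustrates the problem the survey describes: an action taken by an agent under a borrowed human identity is indistinguishable from Alice's own activity, so no filter can recover it after the fact.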

3. No Single Team Clearly Owns AI Agent Identity and Access

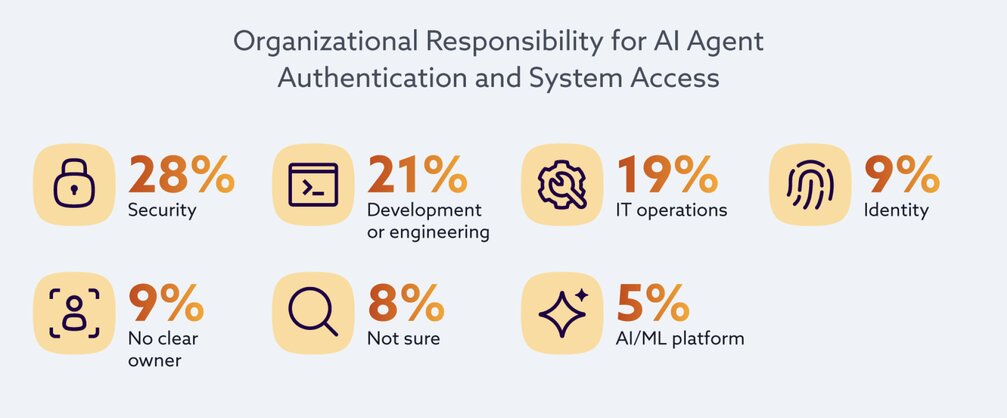

Ambiguity extends beyond taxonomy into organizational accountability. Responsibility for determining how AI agents authenticate and access systems is dispersed across multiple functions: security is identified as the primary owner by 28%, development or engineering by 21%, and IT by 19%, while only 9% point to identity or IAM teams as primarily responsible and another 9% report no clear owner. No single department emerges as the center of control.

When governance spans multiple domains without clear central accountability, consistent implementation and control maturity can be difficult to achieve. In practice, this means that how AI agents are provisioned and managed may differ from one team or environment to another. When no single function clearly owns agent identity and access, teams may rely on existing human or shared accounts, and controls may not be applied uniformly.

Over time, this can lead to inherited permissions, uneven enforcement, and slower coordination when an agent’s behavior needs to be reviewed or corrected.

Without clear, centralized accountability for AI agent identity and access, implementation practices may evolve unevenly, leading to inconsistent credential hygiene, enforcement, revocation, and other controls.

4. Confidence in AI Agent Access Exceeds Control Maturity

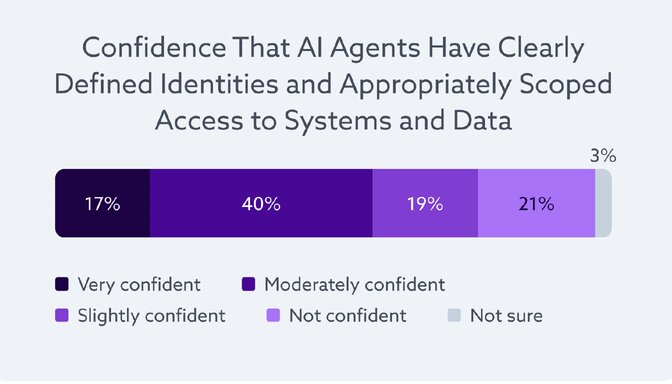

Organizations report moderate confidence in how AI agents are identified and controlled, yet underlying control practices reveal uneven visibility, operational friction, and distributed accountability. When asked how confident they are that AI agents have clearly defined identities and appropriately scoped access to systems and data, 40% report being moderately confident and only 17% very confident. Another 19% indicate slight confidence, 21% are not confident, and 3% are unsure.

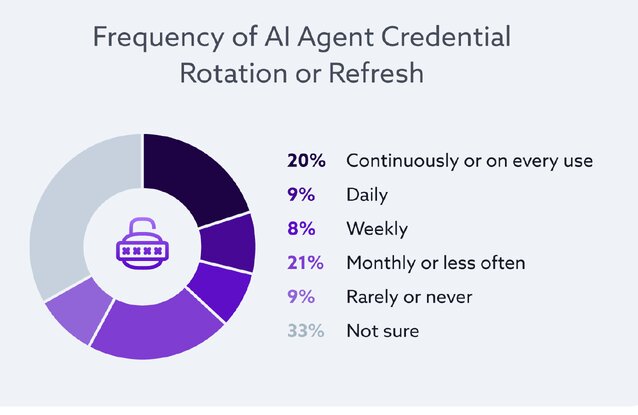

That a full third of respondents lack visibility into how often credentials are rotated suggests that credential hygiene is not consistently tracked or owned. Where rotation practices are unclear, assurances around access durability and secret management may rest more on assumption than on verifiable process.
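Making rotation verifiable largely comes down to recording when each credential was last rotated and checking that against a policy. The minimal sketch below assumes a hypothetical inventory format (credential ID mapped to last rotation time) and a 90-day maximum age chosen purely for illustration; neither comes from the report. Credentials with no recorded rotation date are flagged separately, since that is the visibility gap the survey highlights.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, List, Optional

# Illustrative policy value only, not a recommendation from the report.
MAX_CREDENTIAL_AGE = timedelta(days=90)


def audit_rotation(
    inventory: Dict[str, Optional[datetime]],
    now: datetime,
) -> Dict[str, List[str]]:
    """Classify agent credentials by rotation status.

    `inventory` maps a hypothetical credential ID to its last rotation
    time, or None when no rotation date was ever recorded.
    """
    report: Dict[str, List[str]] = {"unknown": [], "stale": [], "ok": []}
    for cred_id, last_rotated in sorted(inventory.items()):
        if last_rotated is None:
            report["unknown"].append(cred_id)  # no evidence of rotation at all
        elif now - last_rotated > MAX_CREDENTIAL_AGE:
            report["stale"].append(cred_id)    # rotated, but too long ago
        else:
            report["ok"].append(cred_id)
    return report


now = datetime(2025, 6, 1, tzinfo=timezone.utc)
inventory = {
    "agent-ci-deploy": now - timedelta(days=10),
    "agent-data-sync": now - timedelta(days=200),
    "agent-helpdesk": None,
}
print(audit_rotation(inventory, now))
```

Keeping "unknown" distinct from "stale" matters here: a stale credential is a known problem with a clear fix, while an unknown one means nobody can say whether the policy is being met.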

Organizations often feel reasonably confident in managing AI agent access, yet the underlying operational mechanics reveal uneven implementation and limited transparency. This gap between perceived readiness and demonstrable control maturity reflects the broader challenge of adapting non-human identity practices to rapidly expanding deployments of AI agents. These underlying control dynamics shape how access risk ultimately materializes in practice.

5. AI Agents Often Inherit Access and Expand the Attack Surface

AI agents introduce access patterns that expand privilege, create indirect pathways, and increase exposure to prompt-driven manipulation.

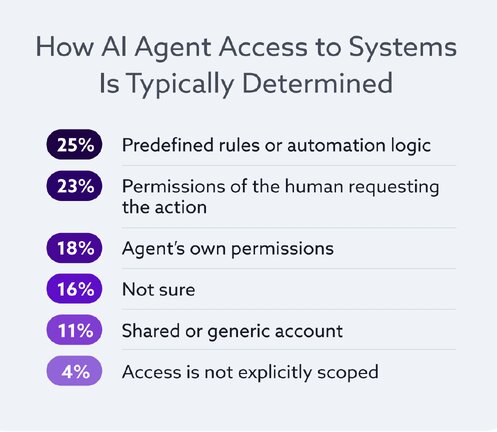

These risk perceptions align closely with how AI agent access is determined in practice. This distribution suggests that access decisions are frequently anchored in human context or pre-existing automation logic rather than in narrowly defined, agent-specific permissions.

This pattern creates an inherent asymmetry in how access is managed. When agents operate under human or shared identities, the associated permissions apply automatically as a function of that identity. Refining those permissions, however, often depends on downstream governance processes, policy updates, or manual intervention. Where enforcement mechanisms are uneven or inconsistent, inherited access can persist longer than intended, increasing exposure.

The overall pattern indicates that traditional access control assumptions do not translate cleanly to agentic behavior. Access is frequently inherited, influenced by human context, or governed through shared identities, creating conditions in which excess privilege and limited visibility can coexist. Addressing these dynamics requires enforcement models that account for how agent access is provisioned, inherited, and constrained in practice.

6. Teams Are Using Governance as a Stopgap for Missing AI Agent Identity Controls

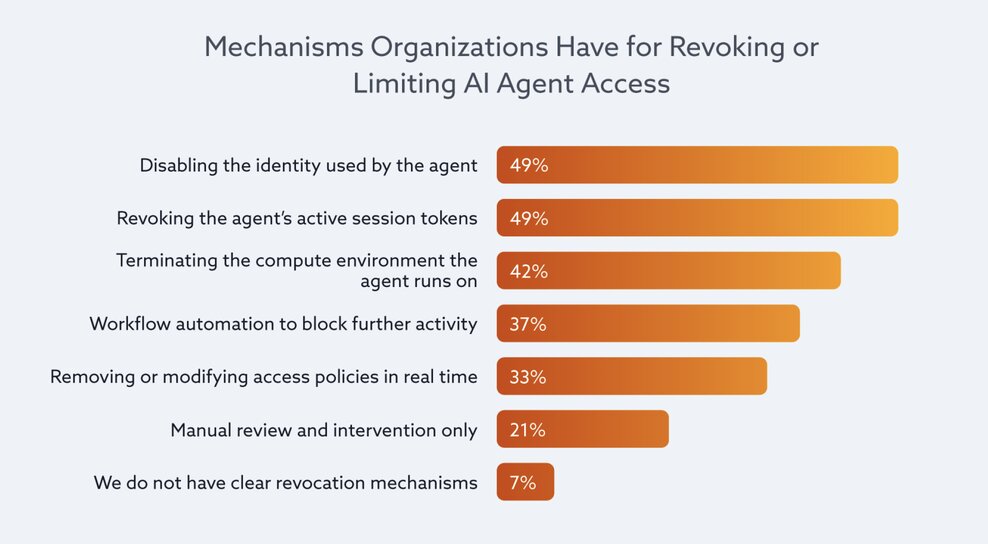

As AI agents assume greater operational responsibility, many organizations are relying on governance mechanisms—such as policy restrictions, human approvals, and post-action monitoring—to manage risk when identity-level IAM controls are not yet consistently embedded for AI agents.

Controls applied to high-impact or sensitive AI agent actions show a similar pattern. Where safeguards rely heavily on approval workflows or retrospective logging, risk management becomes procedural rather than continuously enforced at the identity layer. This reliance on governance and procedural safeguards suggests that dynamic, context-aware enforcement models are not yet uniformly embedded in AI agent deployments.

Accountability structures reinforce this picture. When an AI agent takes an unintended or undesired action, responsibility is most often assigned to security or IT teams (28%) or development or engineering teams (25%). Business or product owners are cited by 18%, 9% report shared responsibility across multiple teams, 6% assign responsibility to the initiating human user, and 15% are unsure. This diffusion of accountability indicates that governance remains distributed and situational. As AI agent autonomy increases, unclear ownership can complicate consistent enforcement and coordinated response.

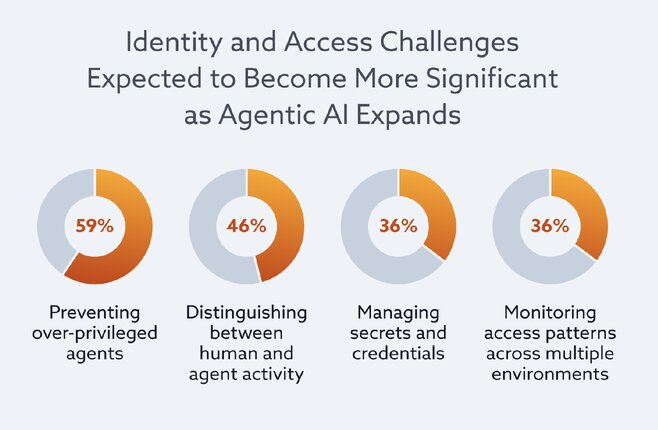

Looking ahead, respondents signal awareness that governance-heavy approaches may not be sufficient as adoption scales. This prioritization suggests that sustainable control is more closely associated with continuous visibility and identity clarity than with emergency shutdown mechanisms. Future identity and access concerns align with these preferences. These anticipated challenges mirror the structural and operational gaps identified in earlier findings, particularly around over-privilege, attribution, and cross-environment enforcement.

The overall pattern suggests that current control strategies emphasize oversight and containment more than embedded, identity-bound, real-time enforcement. Governance mechanisms are playing a central role in managing AI agent risk. Yet respondents’ forward-looking priorities indicate recognition that visibility, clear identity separation, and short-lived access models are necessary to extend IAM principles more systematically to AI agents. The findings collectively point toward a shift from procedural safeguards to more systematic, identity-centric enforcement as AI agent autonomy continues to expand.
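Short-lived access can be illustrated with a credential that carries its own scope and expiry, so access lapses by default instead of waiting for someone to revoke it. The sketch below uses only Python's standard `hmac`, `hashlib`, `json`, and `secrets` modules; the token layout, the `issue_token`/`check_access` helpers, the `ttl_seconds` parameter, and the scope names are hypothetical and deliberately simplified. A real deployment would typically rely on an established token format and a managed signing key, so treat this as an illustration of the idea rather than an implementation.

```python
import hashlib
import hmac
import json
import secrets
import time

# Hypothetical signing key; a real system would use a managed secret.
SIGNING_KEY = secrets.token_bytes(32)


def issue_token(agent_id: str, scopes: list, ttl_seconds: int, now: float) -> str:
    """Issue a signed token bound to one agent, its scopes, and an expiry."""
    claims = {"sub": agent_id, "scopes": sorted(scopes), "exp": now + ttl_seconds}
    body = json.dumps(claims, sort_keys=True)
    sig = hmac.new(SIGNING_KEY, body.encode(), hashlib.sha256).hexdigest()
    return body + "." + sig  # hex signature contains no "." characters


def check_access(token: str, required_scope: str, now: float) -> bool:
    """Allow the action only if the token is authentic, unexpired, and in scope."""
    body, _, sig = token.rpartition(".")
    expected = hmac.new(SIGNING_KEY, body.encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(sig, expected):
        return False  # tampered or forged token
    claims = json.loads(body)
    return now < claims["exp"] and required_scope in claims["scopes"]


t0 = time.time()
token = issue_token("agent:report-builder", ["warehouse:read"], ttl_seconds=300, now=t0)
print(check_access(token, "warehouse:read", now=t0 + 60))   # within TTL and scope
print(check_access(token, "warehouse:write", now=t0 + 60))  # outside scope
print(check_access(token, "warehouse:read", now=t0 + 600))  # expired
```

The design choice worth noting is that expiry is enforced on every check at the identity layer, which is the kind of continuous enforcement the findings contrast with approval workflows and retrospective logging.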

Conclusion

AI agents are becoming embedded across enterprise environments, interacting with applications, infrastructure, and data systems in ways that increasingly resemble operational actors rather than experimental tools. As these systems take on greater autonomy and operational responsibility, the identity and access models used to manage them are still evolving.

The findings suggest that many organizations are adapting existing identity frameworks rather than introducing new ones purpose-built for AI agents. As a result, agents are frequently embedded within human or shared identities, inherit permissions not originally designed for their tasks, and operate across environments where attribution and policy enforcement may vary. These structural dynamics influence how access is granted, how behavior is monitored, and how quickly organizations can respond when unexpected actions occur.

Strengthening identity-centric controls for AI agents—alongside visibility and consistent policy enforcement—will be an important step toward ensuring that agent autonomy scales in a controlled and accountable way.

Secure Identity in the Age of AI Agents

AI agents are expanding your attack surface, and without proper identity controls they become invisible risks.

At Reputiva, we help organizations:

- Treat AI agents as first-class identities

- Enforce least privilege access

- Implement Zero Trust across AI workflows

- Improve visibility and attribution

Turn AI Risk Into Control. Start a Conversation Today