Artificial intelligence (AI) is shaping economies, industries, and societies at an unprecedented pace. The Stanford HAI 2026 AI Index Report provides a comprehensive, data-driven view of the field, and its data reveals a critical reality: AI is scaling faster than the systems designed to govern, evaluate, and secure it.

From rapid enterprise adoption to breakthroughs in reasoning, robotics, and scientific discovery, the report highlights both the immense potential of AI and the growing gaps in governance, safety, and real-world readiness.

Generative AI has reached mass adoption faster than either the personal computer or the internet, hitting roughly 53% population-level adoption within three years.

Organizational adoption rose to 88%, and early estimates suggest the consumer value of generative AI has grown substantially within a year, with the median value per user tripling between 2025 and 2026.

In this article, we break down 15 key takeaways from the report and what they mean for organizations navigating the future of AI.

The AI Index provides unbiased, rigorously vetted, and globally sourced data that helps policymakers, researchers, journalists, executives, and the general public develop a deeper understanding of the complex field of AI.

Stanford HAI (Stanford Institute for Human-Centered Artificial Intelligence)

An interdisciplinary initiative dedicated to advancing AI research, education, policy, and practice to improve the human condition.

1. AI capability is not plateauing. It is accelerating and reaching more people than ever.

Industry produced over 90% of notable frontier models in 2025, and several of those models now meet or exceed human baselines on PhD-level science questions, multimodal reasoning, and competition mathematics. On SWE-bench Verified, a key coding benchmark, performance rose from 60% to nearly 100% of the human baseline in a single year. Organizational adoption reached 88%, and 4 in 5 university students now use generative AI.

2. The U.S.-China AI model performance gap has effectively closed.

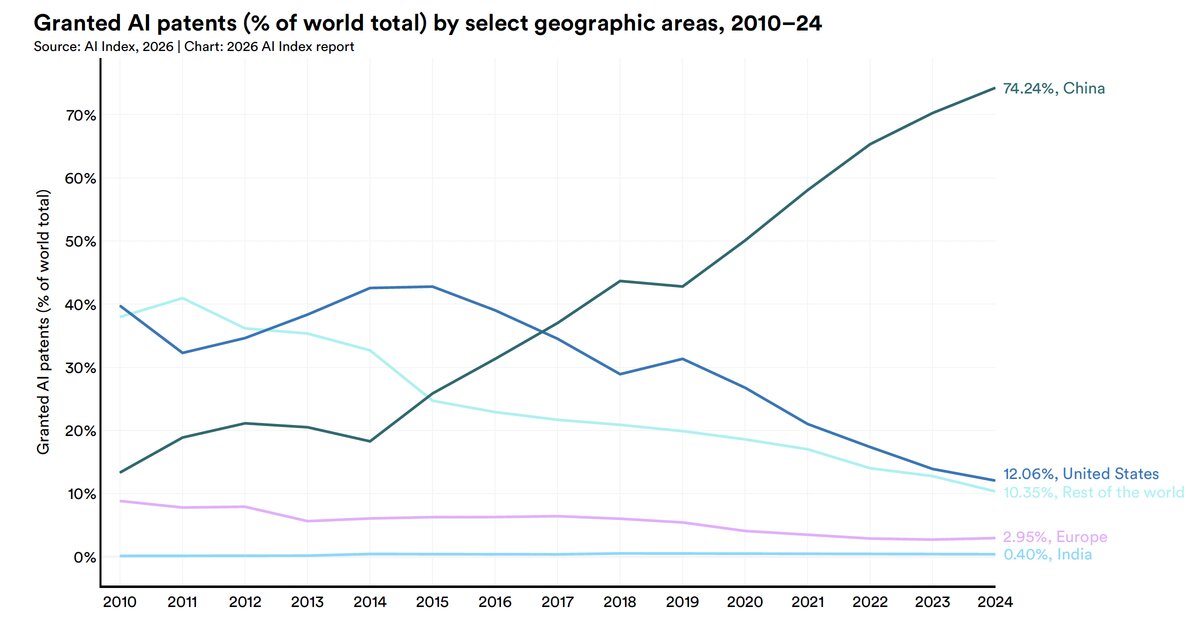

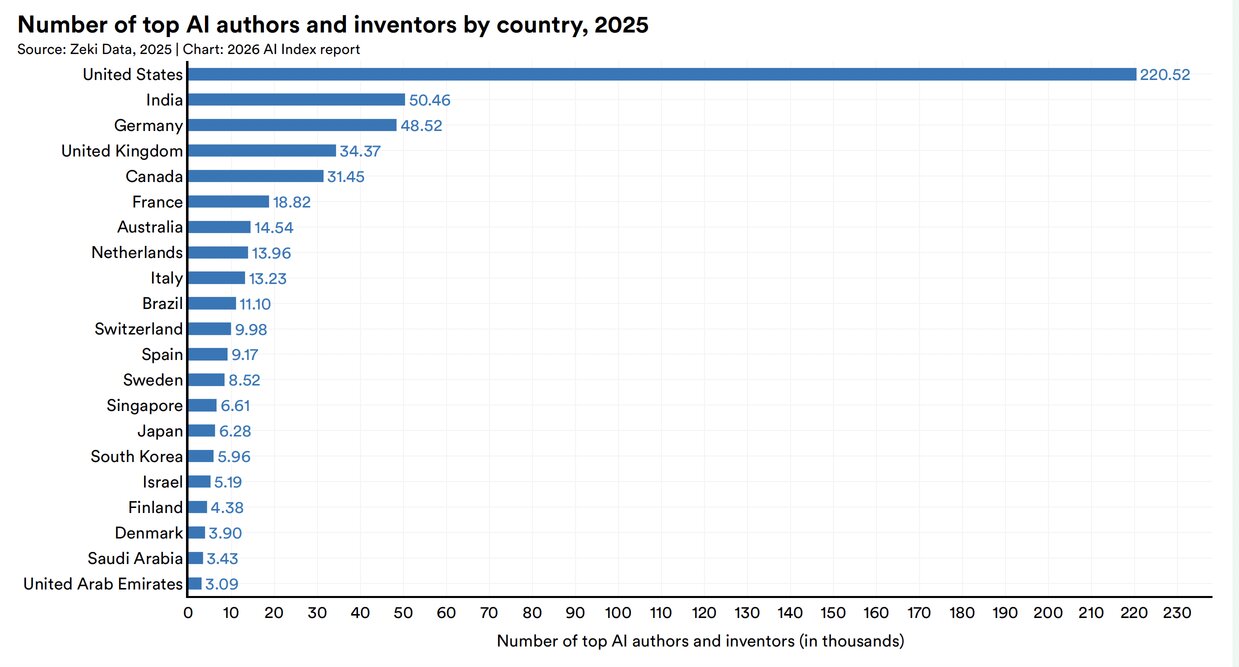

U.S. and Chinese models have traded the lead multiple times since early 2025. In February 2025, DeepSeek-R1 briefly matched the top U.S. model, and as of March 2026 Anthropic’s top model leads by just 2.7%. The U.S. still produces more top-tier AI models and higher-impact patents, while China leads in publication volume, citations, patent output, and industrial robot installations. South Korea stands out for its innovation density, leading the world in AI patents per capita.

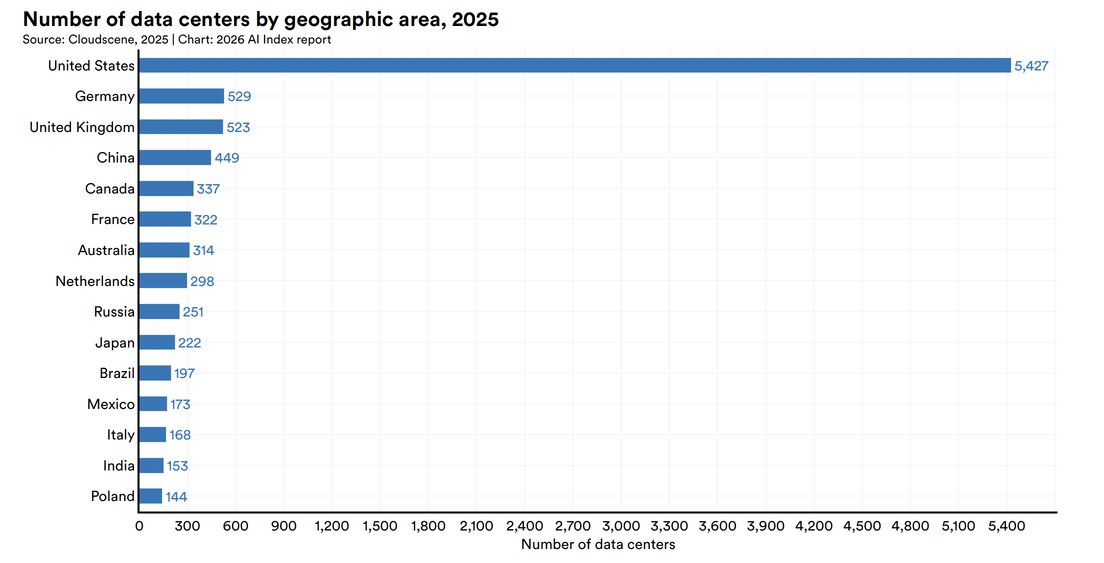

3. The United States hosts the most AI data centers, with the majority of their chips fabricated by one Taiwanese foundry.

The United States hosts 5,427 data centers, more than 10 times as many as any other country, and those data centers consume more energy than any other country's. A single company, TSMC, fabricates almost every leading AI chip. That makes the global AI hardware supply chain dependent on one foundry in Taiwan, although a TSMC expansion in the U.S. began operations in 2025.

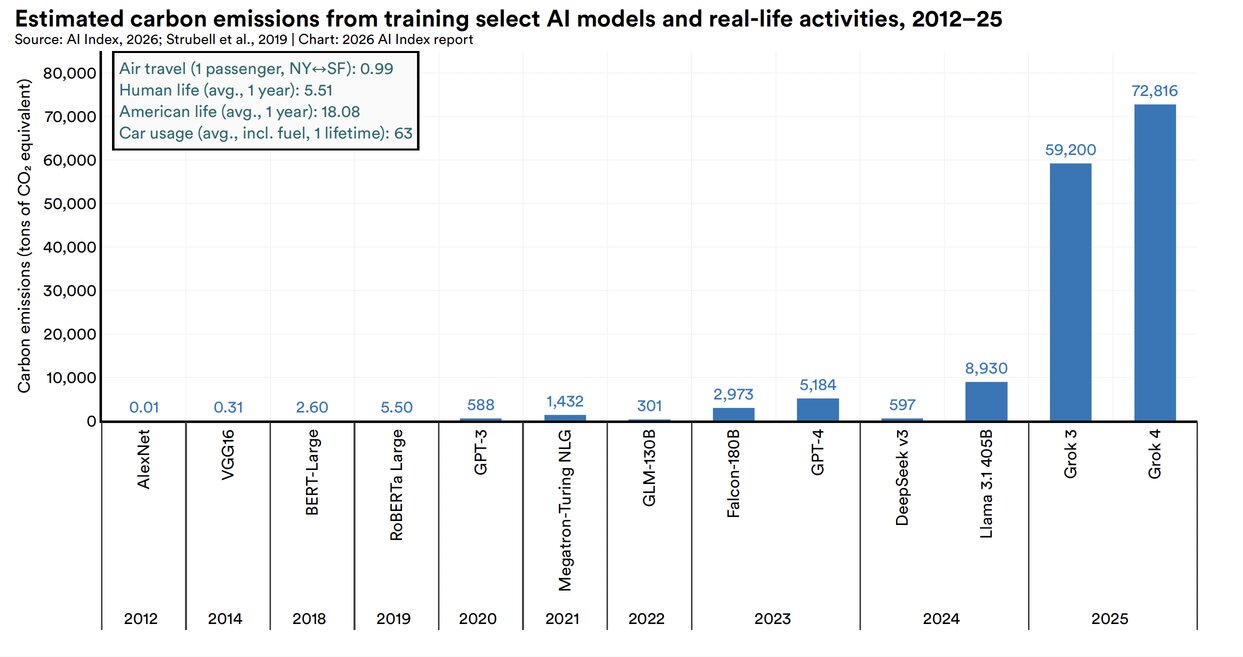


Energy and Environmental Impact

A short GPT-4o query consumes approximately 0.42 Wh, about 40% more than a Google search at 0.3 Wh. A daily session of eight medium-length queries uses about 9.7 Wh, roughly the energy needed to charge two smartphones. Across hundreds of millions of daily queries, however, these small per-query costs add up to a far larger total.
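The per-query figures above support a quick back-of-the-envelope check. The Python sketch below recomputes the 40% comparison and derives the implied energy per medium-length query from the 9.7 Wh session. It then scales the short-query figure to a full year. The daily query volume (`ASSUMED_DAILY_QUERIES = 300_000_000`) is a hypothetical stand-in for "hundreds of millions" and does not come from the report. The helper names are illustrative.

```python
# Back-of-the-envelope scaling of per-query inference energy.
# Per-query figures come from the report summary; the daily query
# volume is a HYPOTHETICAL assumption used only for illustration.

GPT4O_SHORT_QUERY_WH = 0.42   # approx. energy per short GPT-4o query (Wh)
GOOGLE_SEARCH_WH = 0.3        # approx. energy per Google search (Wh)
SESSION_WH = 9.7              # eight medium-length queries (about two phone charges)
QUERIES_PER_SESSION = 8

ASSUMED_DAILY_QUERIES = 300_000_000  # hypothetical: "hundreds of millions" per day


def relative_increase(a: float, b: float) -> float:
    """Return how much larger a is than b, as a fraction (0.4 means 40%)."""
    return a / b - 1.0


def annual_energy_gwh(wh_per_query: float, queries_per_day: int) -> float:
    """Scale a per-query energy cost (Wh) to a yearly total in GWh."""
    wh_per_year = wh_per_query * queries_per_day * 365
    return wh_per_year / 1e9  # 1 GWh = 1e9 Wh


if __name__ == "__main__":
    extra = relative_increase(GPT4O_SHORT_QUERY_WH, GOOGLE_SEARCH_WH)
    per_medium_query = SESSION_WH / QUERIES_PER_SESSION
    yearly = annual_energy_gwh(GPT4O_SHORT_QUERY_WH, ASSUMED_DAILY_QUERIES)

    print(f"GPT-4o short query vs. search: {extra:.0%} more energy")
    print(f"Implied energy per medium-length query: {per_medium_query:.2f} Wh")
    print(f"Short queries only, {ASSUMED_DAILY_QUERIES:,}/day: {yearly:.1f} GWh/year")
```

With these inputs the script reports 40% more energy than a search and about 1.21 Wh per medium-length query. It also reports roughly 46 GWh per year for short queries alone. The annual total scales linearly with the assumed query volume, so doubling the daily count doubles the result.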

Annual estimates of GPT-4o inference water use range from about 1.3 to 1.6 million kiloliters. At the high end, that exceeds the annual drinking water needs of 12 million people.

AI Data Center

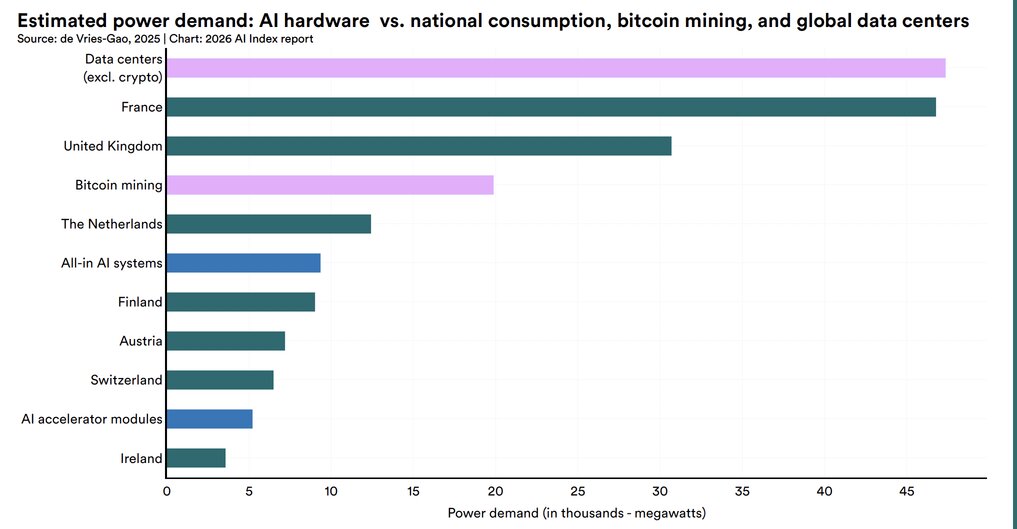

When the full systems supporting AI accelerators (servers, cooling, networking) are included, estimated demand reached approximately 9,400 MW. To put that scale in perspective, the combined power demand of all-in AI systems is comparable to the national electricity consumption of Switzerland or Austria and roughly half that of Bitcoin mining.

Excluding crypto, global data centers accounted for the highest estimated power demand at around 47,000 MW, with AI hardware making up a growing share of that total.
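Power demand (MW) and national electricity consumption (usually quoted in TWh per year) use different units, so comparisons like the one above are hard to read at a glance. The Python sketch below converts a steady demand into annual energy. It assumes the load runs at the stated level for all 8,760 hours of a year, which is a simplification and not a figure from the report. The function name and the optional `utilization` parameter are illustrative.

```python
# Convert a steady power demand (MW) into annual energy use (TWh/year).
# ASSUMPTION: the load runs at the stated level all year (8,760 hours,
# no downtime) unless a lower utilization factor is supplied.

HOURS_PER_YEAR = 24 * 365  # 8,760 h; ignores leap years


def mw_to_twh_per_year(power_mw: float, utilization: float = 1.0) -> float:
    """Annual energy in TWh for a load of power_mw at the given utilization (0 to 1)."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    mwh = power_mw * utilization * HOURS_PER_YEAR
    return mwh / 1_000_000  # 1 TWh = 1,000,000 MWh


# Demand estimates quoted in the section above.
estimates_mw = {
    "All-in AI systems": 9_400,
    "Global data centers (excl. crypto)": 47_000,
}

if __name__ == "__main__":
    for label, mw in estimates_mw.items():
        print(f"{label}: {mw:,} MW ~ {mw_to_twh_per_year(mw):.0f} TWh/year")
```

At continuous full load, 9,400 MW works out to roughly 82 TWh per year and 47,000 MW to roughly 412 TWh per year. Real facilities rarely run flat out, so passing a `utilization` below 1.0 scales these totals down proportionally.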


4. AI models can win a gold medal at the International Mathematical Olympiad but cannot reliably tell time—an example of what researchers call the jagged frontier of AI.

Gemini Deep Think earned a gold medal at the IMO, yet the top model reads analog clocks correctly just 50.1% of the time. AI agents jumped from 12% to about 66% task success on OSWorld, which tests agents on real computer tasks across operating systems, though they still fail roughly 1 in 3 attempts on structured benchmarks.

5. Robots still fail at most household tasks, even as they excel in controlled environments.

Robots succeed in only 12% of household tasks, highlighting how far AI is from mastering the physical world. On RLBench, robotic manipulation in software-based simulations has reached 89.4% success, but the gap between predictable lab settings and unpredictable household environments is wide.

6. Responsible AI is not keeping pace with AI capability, with safety benchmarks lagging and incidents rising sharply.

Almost all leading frontier AI model developers report results on capability benchmarks, but reporting on responsible AI benchmarks remains spotty. Documented AI incidents rose to 362 in 2025, up from 233 in 2024. Adding to the challenge, recent research found that improving one responsible AI dimension, such as safety, can degrade another, such as accuracy.

7. The United States leads in AI investment, but its ability to attract global talent is declining.

U.S. private AI investment reached $285.9 billion in 2025, more than 23 times the $12.4 billion invested in China. Private investment figures alone likely understate China's total AI spending, however, given its government guidance funds. The U.S. also led in entrepreneurial activity with 1,953 newly funded AI companies in 2025, more than 10 times the next closest country. However, the number of AI researchers and developers moving to the U.S. is declining.

8. AI adoption is spreading at historic speed, and consumers are deriving substantial value from tools they often access for free.

Generative AI reached 53% population adoption within three years, faster than the PC or the internet, though the pace varies by country and correlates strongly with GDP per capita. Some countries show higher-than-expected adoption, such as Singapore (61%) and the United Arab Emirates (54%), while the U.S. ranks 24th at 28.3%. The estimated value of generative AI tools to U.S. consumers reached $172 billion annually by early 2026, with the median value per user tripling between 2025 and 2026.

9. Productivity gains from AI are appearing in many of the same fields where entry-level employment is starting to decline.

Studies show productivity gains of 14% to 26% in customer support and software development, with weaker or negative effects in tasks requiring more judgment. AI agent deployment rates remain in the single digits across nearly all business functions. Software development is where AI's measured productivity gains are clearest, yet employment among U.S. developers aged 22 to 25 fell nearly 20% from 2024. Over the same period, headcount for older developers continued to grow.

10. AI’s environmental footprint is expanding alongside its capabilities.

Grok 4’s estimated training emissions reached 72,816 tons of CO2 equivalent. AI data center power capacity rose to 29.6 GW, comparable to New York state at peak demand, and annual GPT-4o inference water use alone may exceed the drinking water needs of 12 million people.

11. AI models for science can outperform human scientists, though bigger models do not always perform better.

Frontier models outperform human chemists on average on ChemBench, yet they score below 20% on astrophysics replication tasks and only 33% on Earth observation questions. A 111-million-parameter protein language model, MSAPairformer, beat previous leading methods on ProteinGym, and a 200-million-parameter genomics model, GPN-Star, outperformed a model nearly 200 times larger. Most AI foundation models for science come from cross-sector collaborations, in contrast with the industry-dominated landscape of general-purpose AI.

12. AI is transforming clinical care, but rigorous evidence remains limited.

AI tools that automatically generate clinical notes from patient visits saw substantial adoption in 2025. Across multiple hospital systems, physicians reported up to 83% less time spent writing notes and significant reductions in burnout. Beyond these documentation tools, however, the evidence base for clinical AI remains thin. A review of more than 500 clinical AI studies found that nearly half relied on exam-style questions rather than real patient data, and only 5% used real clinical data.

13. Formal education is lagging behind AI, but people are learning AI skills at every stage of life.

Over 80% of U.S. high school and college students now use AI for school-related tasks, but only half of middle and high schools have AI policies in place, and just 6% of teachers say those policies are clear. Outside the classroom, AI engineering skills are accelerating fastest in the United Arab Emirates, Chile, and South Africa. The number of new AI PhDs in the U.S. and Canada increased 22% from 2022 to 2024, and the graduates who account for that increase took jobs in academia rather than industry.

14. AI sovereignty is becoming a defining feature of national policy, but capabilities remain uneven, even as open-source development helps to redistribute who participates.

National AI strategies are expanding, particularly among developing economies, and state-backed investments in AI supercomputing are rising in parallel—a sign of growing ambitions for domestic control over AI ecosystems. Yet model production remains concentrated in the U.S. and China. Open-source development is starting to redistribute participation, with contributions from the rest of the world now outpacing Europe and approaching the United States on GitHub, fueling more linguistically diverse models and benchmarks.

15. AI experts and the public have very different perspectives on the technology’s future, and global trust in institutions to manage AI is fragmented.

When it comes to how people do their jobs, 73% of experts expect a positive impact, compared with just 23% of the public, a 50-point gap. Similar divides appear for AI's impact on the economy and medical care. Trust in governments to regulate AI also varies widely. Among surveyed countries, the United States reported the lowest level of trust in its own government to regulate AI, at 31%. Globally, the EU is trusted more than the United States or China to regulate AI effectively.

AI is scaling faster than most organizations can manage securely.

At Reputiva, we help organizations design and secure cloud and AI environments across AWS, Azure, and GCP, ensuring visibility, governance, and risk control as AI adoption accelerates.

Start with a Cloud & AI Security Assessment to identify risks, strengthen your security posture, and build a scalable foundation for AI-driven innovation.