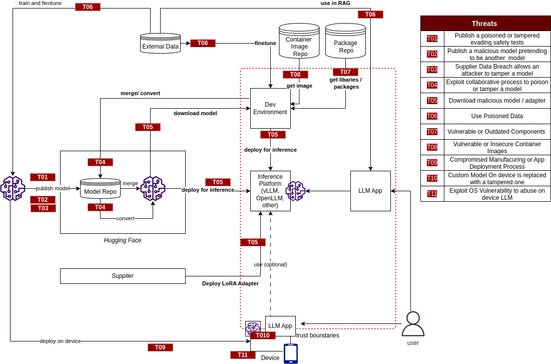

Modern AI systems are no longer built from a single model or application. Today’s Large Language Model (LLM) ecosystems depend on a growing network of third-party models, datasets, APIs, plugins, open-source frameworks, vector databases, LoRA adapters, cloud infrastructure, and AI marketplaces. That interconnected ecosystem creates a new and rapidly expanding attack surface.

In the OWASP Top 10 for LLM Applications 2025, LLM03:2025 Supply Chain highlights the growing security risks associated with the AI supply chain, from compromised open-source models and poisoned datasets to malicious LoRA adapters, vulnerable dependencies, and manipulated model repositories.

As organizations accelerate the adoption of AI, agentic systems, Retrieval-Augmented Generation (RAG), and open-source LLMs, supply chain security is quickly becoming one of the most important areas of AI governance and cybersecurity.

What is Supply Chain Risk?

LLM supply chains are susceptible to various vulnerabilities that can compromise the integrity of training data, models, and deployment platforms. These risks can result in biased outputs, security breaches, or system failures. While traditional software vulnerabilities focus on issues such as code flaws and dependencies, in ML, risks also extend to third-party pre-trained models and data.

Creating LLMs is a specialized task that often depends on third-party models. The rise of open-access LLMs and new fine-tuning methods like “LoRA” (Low-Rank Adaptation) and “PEFT” (Parameter-Efficient Fine-Tuning), especially on platforms like Hugging Face, introduces new supply-chain risks. The emergence of on-device LLMs increases the attack surface and supply-chain risks for LLM applications.

Common Examples of Supply Chain Risk

1. Traditional Third-party Package Vulnerabilities

Attackers exploit outdated or deprecated components to compromise LLM applications.

2. Licensing Risks

AI development often involves diverse software and dataset licenses, which can pose risks if not properly managed. Different open-source and proprietary licenses impose varying legal requirements. Dataset licenses may restrict usage, distribution, or commercialization.

3. Outdated or Deprecated Models

Using outdated or deprecated models that are no longer maintained can leave known security issues unpatched.

4. Vulnerable Pre-Trained Models

Models are binary black boxes, and unlike open-source code, static inspection offers little in the way of security assurance. Vulnerable pre-trained models can contain hidden biases, backdoors, or other malicious features that the model repository's safety evaluations have not identified.

5. Weak Model Provenance

Model Cards and associated documentation provide model information and are relied upon by users, but they offer no guarantees about the origin of the model. An attacker can compromise a supplier account on a model repository, or create a similar-looking one, and combine it with social engineering techniques to compromise the supply chain of an LLM application.

Currently there are no strong provenance assurances in published models.

6. Vulnerable LoRA adapters

LoRA is a popular fine-tuning technique that enhances modularity by allowing pre-trained layers to be bolted onto an existing LLM. The method increases efficiency but creates new risks: a malicious LoRA adapter can compromise the integrity and security of the pre-trained base model.

7. Exploitation of Collaborative Development Processes

Collaborative model merging and model handling services (e.g., conversions) hosted in shared environments can be exploited to introduce vulnerabilities into shared models. Model merging is very popular on Hugging Face, with merged models topping the OpenLLM leaderboard, and it can be exploited to bypass reviews.

8. On-Device LLM Supply-Chain Vulnerabilities

On-device LLMs increase the supply-chain attack surface through compromised manufacturing processes and the exploitation of device OS or firmware vulnerabilities to compromise models. Attackers can reverse engineer and re-package applications with tampered models.

9. Unclear T&Cs and Data Privacy Policies

Unclear T&Cs and data privacy policies of the model operators lead to the application’s sensitive data being used for model training and subsequent sensitive information exposure. This may also apply to risks from using copyrighted material by the model supplier.
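The look-alike repository risk described under Weak Model Provenance can be partly caught with a simple name check before anything is downloaded. The following is a minimal sketch, not a complete defense: the repository names, the `TRUSTED_REPOS` allowlist, the `lookalike_of` helper, and the 0.9 similarity threshold are all illustrative assumptions rather than anything prescribed by OWASP or a model hub.

```python
from difflib import SequenceMatcher

# Hypothetical allowlist of repositories a team has already vetted.
TRUSTED_REPOS = ["example-org/chat-model-7b", "example-org/embedding-model"]


def lookalike_of(repo_id, trusted=TRUSTED_REPOS, threshold=0.9):
    """Return the trusted repo that `repo_id` closely imitates, or None.

    An exact match is trusted and returns None. A near match (for example
    a swapped character) returns the name it appears to imitate.
    """
    if repo_id in trusted:
        return None
    for known in trusted:
        similarity = SequenceMatcher(None, repo_id.lower(), known.lower()).ratio()
        if similarity >= threshold:
            return known
    return None


# "examp1e" uses the digit 1 in place of the letter l.
suspect = lookalike_of("examp1e-org/chat-model-7b")
if suspect is not None:
    print(f"Blocked: name closely resembles trusted repo {suspect}")
```

A check like this only flags near-miss names. It says nothing about whether a trusted account has itself been compromised, which is why hash and signature checks are still needed.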

Real-World Examples of LLM03:2025 Supply Chain Exploits

1. Malicious AI Models uploaded to Hugging Face

Hugging Face, often described as the GitHub of machine learning, is an open-source AI platform that is primarily used to discover, share, and deploy pre-trained AI models for NLP, computer vision, and audio tasks. Attackers uploaded malicious repositories and AI models to Hugging Face, disguised as legitimate AI tools and OpenAI-related projects. Some contained infostealer malware and malicious pickle files capable of arbitrary code execution.

2. Malware distributed through AI ecosystem

Cybercriminals used Hugging Face infrastructure to distribute Android malware disguised as security applications.

3. Pickle-Based AI Model exploits

Security researchers continue to warn about insecure pickle serialization in machine learning models. Malicious pickle files can: execute arbitrary code, bypass scanners, and compromise environments during model loading.
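To make the pickle risk concrete, the sketch below lists which Python objects a pickle stream would import, without ever loading it. It relies only on the standard-library `pickletools.genops` opcode walker. The `ALLOWED_GLOBALS` allowlist and the helper names are assumptions made for illustration, and the handling of `STACK_GLOBAL` is a heuristic. As noted above, scanners of this kind can be bypassed, so treat this as a first filter rather than a guarantee.

```python
import pickle
import pickletools
from collections import OrderedDict

# Hypothetical allowlist for illustration; a real one depends on your framework.
ALLOWED_GLOBALS = {"collections.OrderedDict"}


def imported_globals(data: bytes) -> list[str]:
    """List the 'module.name' references a pickle stream would import.

    Only walks the opcode stream with pickletools.genops; nothing is unpickled.
    """
    found = []
    recent_strings = []  # candidate operands for STACK_GLOBAL (protocol 4+)
    for opcode, arg, _pos in pickletools.genops(data):
        if opcode.name in ("GLOBAL", "INST"):
            # pickletools reports the operand as "module name".
            module, name = arg.split(" ", 1)
            found.append(f"{module}.{name}")
        elif opcode.name == "STACK_GLOBAL":
            # Heuristic: module and name are usually the last two strings pushed.
            if len(recent_strings) >= 2:
                found.append(".".join(recent_strings[-2:]))
            else:
                found.append("<unresolved>")
        elif isinstance(arg, str):
            recent_strings.append(arg)
    return found


def disallowed_globals(data: bytes, allowed=ALLOWED_GLOBALS) -> list[str]:
    """Return every imported reference that is not on the allowlist."""
    return [ref for ref in imported_globals(data) if ref not in allowed]


# A hand-written protocol-0 payload that would call os.system if it were loaded.
# It is only scanned here, never passed to pickle.load.
malicious = b"cos\nsystem\n(S'echo hi'\ntR."
benign = pickle.dumps(OrderedDict(layer=[0.1, 0.2]))

print(disallowed_globals(malicious))
print(disallowed_globals(benign))
```

Where the framework supports it, a weights-only format such as safetensors avoids the problem at its source, because loading it does not execute code.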

Prevention and Mitigation Strategies

- Carefully vet data sources and suppliers, including their T&Cs and privacy policies, and use only trusted suppliers. Regularly review and audit supplier security and access to confirm that their security posture and T&Cs have not changed.

- Apply comprehensive AI red teaming and evaluations when selecting a third-party model, focusing especially on the use cases you plan to deploy it for.

- Maintain an accurate, up-to-date, and signed inventory of components using a Software Bill of Materials (SBOM) to help prevent tampering with deployed packages. SBOMs can also be used to detect and alert on new zero-day vulnerabilities quickly.

OWASP CycloneDX is a full-stack Bill of Materials (BOM) standard that provides advanced supply chain capabilities for cyber risk reduction.

- Only use models from verifiable sources and use third-party model integrity checks with signing and file hashes to compensate for the lack of strong model provenance. Similarly, use code signing for externally supplied code.

- Implement strict monitoring and auditing practices for collaborative model development environments to prevent and quickly detect any abuse.

- Implement a patching policy to mitigate vulnerable or outdated components. Ensure the application relies on maintained versions of its APIs and underlying model.

- Encrypt models deployed at the AI edge, apply integrity checks, and use vendor attestation APIs to prevent tampered apps and models and to terminate applications running on unrecognized firmware.
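Two of the strategies above, the SBOM inventory and file-hash integrity checks, fit together naturally: record a model file's hash in the inventory when it is vetted, then re-check it before every load. The sketch below assumes a CycloneDX-style JSON layout (`bomFormat`, `components`, `hashes`) and the `machine-learning-model` component type. The helper names and the demo file are hypothetical, and the sketch does not sign the SBOM, which a production inventory should do.

```python
import hashlib
import json
import tempfile
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large model files do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def sbom_component(path: Path, name: str, version: str) -> dict:
    """Describe one model file as a CycloneDX-style component entry."""
    return {
        "type": "machine-learning-model",
        "name": name,
        "version": version,
        "hashes": [{"alg": "SHA-256", "content": sha256_of(path)}],
    }


def build_sbom(components: list[dict]) -> dict:
    """Wrap component entries in a minimal CycloneDX-style document."""
    return {
        "bomFormat": "CycloneDX",
        "specVersion": "1.5",
        "version": 1,
        "components": components,
    }


def verify(path: Path, component: dict) -> bool:
    """Check the file on disk against the hash recorded in the inventory."""
    expected = {h["content"] for h in component["hashes"] if h["alg"] == "SHA-256"}
    return sha256_of(path) in expected


# Usage with a throwaway file standing in for real model weights.
with tempfile.TemporaryDirectory() as tmp:
    model = Path(tmp) / "model.bin"
    model.write_bytes(b"original weights")
    entry = sbom_component(model, "demo-model", "1.0.0")
    ok_before = verify(model, entry)   # file matches the recorded hash
    model.write_bytes(b"tampered weights")
    ok_after = verify(model, entry)    # file changed after it was recorded

print(json.dumps(build_sbom([entry]), indent=2))
print(ok_before, ok_after)
```

A hash only proves the file is the one that was vetted. It does not prove the vetted file was safe, so this check complements red teaming and provenance review rather than replacing them.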

AI Security Is No Longer Just About the Model: It’s About the Entire Ecosystem

Many organizations are rushing into AI adoption without fully understanding how dependent modern AI systems are on external components and third-party ecosystems. A single AI application may rely on: open-source models from Hugging Face, external APIs, cloud AI platforms, vector databases, fine-tuned LoRA adapters, third-party plugins, agent frameworks, and datasets sourced from multiple providers.

Every dependency introduces trust, integrity, privacy, compliance, and operational risks.

OWASP’s LLM03:2025 highlights a critical reality: organizations must start treating AI systems like modern software supply chains, with governance, inventory management, provenance validation, access control, continuous monitoring, and security reviews built into the AI lifecycle.

The challenge is no longer simply “Which model should we use?”

The real question is:

“Can we trust every component connected to our AI environment?”

For organizations adopting AI across AWS, Azure, and GCP, supply chain security must become a core part of AI governance, cloud security, identity management, and enterprise risk management strategies.

AI adoption without supply chain security creates hidden risks that many organizations are only beginning to understand. From vulnerable open-source models and poisoned datasets to insecure plugins, APIs, and cloud AI infrastructure, modern AI systems require a security-first approach from design to deployment.

Reputiva helps organizations strengthen AI, cloud, and cybersecurity resilience through:

- AI security assessments,

- cloud security architecture,

- Microsoft 365 and Google Workspace security,

- AI governance and risk management,

- identity and access management,

- and security awareness training across AWS, Azure, and GCP environments.

As AI ecosystems become more connected, securing the AI supply chain will become just as important as securing the application itself.

Book a Cloud & AI Security Consultation with Reputiva today to assess your AI readiness, strengthen your security posture, and build safer AI systems from the ground up.