Artificial intelligence is evolving from passive tools into autonomous systems capable of making decisions and executing actions. The HiddenLayer 2026 AI Threat Landscape Report highlights a critical shift: the rise of agentic AI, systems that can plan, interact with external tools, and operate with minimal human oversight. While these capabilities unlock significant business value, they also introduce entirely new security risks.

As organizations embed AI deeper into operations, the attack surface expands, creating new opportunities for manipulation, misuse, and compromise. In this article, we explore the key findings from the report and what they mean for organizations looking to securely adopt AI at scale.

Innovation without protection invites disruption. Autonomy without oversight invites abuse.

About the Report

- Release Date: March 18, 2026.

- Accompanying Webinar: April 8, 2026.

The 2026 HiddenLayer AI Threat Landscape Report provides a comprehensive analysis of emerging risks across AI systems, with a strong focus on agentic AI, real-world attack patterns, and evolving security challenges. The report draws on industry research, threat intelligence, and practical insights from securing enterprise AI environments to offer a data-driven view of how AI is reshaping the cybersecurity landscape.

We are entering the next phase of the AI revolution. What began as predictive models and generative interfaces is rapidly evolving into autonomous, agentic systems capable of planning, reasoning, and acting on our behalf. In 2026, no mission, enterprise, or government agency will remain untouched by AI agents operating across workflows, networks, and critical infrastructure.

The Rise of AI Agents

Agentic AI represents a profound leap forward. These systems are no longer limited to responding to prompts. They can set goals, call tools, interact with other systems, generate code, initiate transactions, and adapt dynamically to changing environments. Properly harnessed, they promise unprecedented operational efficiency, accelerated innovation, and entirely new models of productivity. But autonomy changes the risk equation.

The conversation around autonomous AI agents gained momentum in 2024, but it wasn’t until 2025 that things truly began to take shape. The shift from experimental demonstrations to production-grade systems occurred rapidly, as major vendors expanded AI capabilities beyond question answering into autonomous task execution.

When AI systems are empowered to take action, the attack surface expands dramatically. The same capabilities that enable agents to automate business processes can be manipulated to automate exploitation. The same reasoning loops that drive efficiency can be redirected toward malicious objectives. As AI gains agency, adversaries gain leverage.

Make no mistake, the defining AI security challenge of this era is not hypothetical superintelligence. It is the weaponization, manipulation, and compromise of autonomous systems by bad actors.

Emerging Threat Landscape

Agentic architectures introduce new layers of vulnerability, including tool poisoning, memory manipulation, model context hijacking, multi-agent collusion, identity abuse, data exfiltration via action chains, and the exploitation of decision-making loops. These risks are not theoretical. They are emerging now across commercial enterprises and federal environments that are experimenting with AI-driven automation.

Securing AI Agents

Traditional cybersecurity principles remain essential, but they are no longer sufficient on their own. Securing agentic AI demands continuous validation of model behavior, real-time inspection of agent actions, guardrails around tool access, and controls that account for systems capable of independent execution. The convergence of AI security and application security has never been more urgent.
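As a rough illustration of what guardrails around tool access could look like, the sketch below checks each action an agent proposes against a deny-by-default allowlist before it runs. Every name here (`ToolPolicy`, `authorize`, the tool names, and the `max_amount` cap) is a hypothetical chosen for illustration and is not taken from the report.

```python
from dataclasses import dataclass, field


@dataclass
class ToolPolicy:
    """Hypothetical per-agent policy: which tools may run, and with what limits."""
    allowed_tools: set[str] = field(default_factory=set)
    max_amount: float = 0.0  # illustrative cap for transaction-style tools


def authorize(policy: ToolPolicy, tool: str, args: dict) -> tuple[bool, str]:
    """Return (allowed, reason) for a proposed tool call. Deny by default."""
    if tool not in policy.allowed_tools:
        return False, f"tool '{tool}' is not on the allowlist"
    amount = args.get("amount", 0.0)
    if amount > policy.max_amount:
        return False, f"amount {amount} exceeds limit {policy.max_amount}"
    return True, "ok"


# Example policy for an assumed support agent.
policy = ToolPolicy(allowed_tools={"search_docs", "create_refund"}, max_amount=100.0)

print(authorize(policy, "create_refund", {"amount": 25.0}))   # within policy
print(authorize(policy, "create_refund", {"amount": 500.0}))  # over the cap
print(authorize(policy, "delete_database", {}))               # not allowlisted
```

In practice a check like this would probably sit in a gateway outside the agent process, so that a manipulated agent cannot bypass it, and every decision would be logged for later review.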

As organizations race toward autonomy, security must move just as quickly. Innovation without protection invites disruption. Autonomy without oversight invites abuse.

Conclusion

AI systems should be assumed exploitable, not merely vulnerable. Securing AI in an agentic era requires a shift away from one-time controls and policy assertions toward continuous accountability, runtime monitoring, enforceable governance, third-party assurance, and security mechanisms designed for systems that evolve and act beyond human-in-the-loop oversight.

What This Means for Your Organization

The shift to agentic AI is not just a technological evolution but a fundamental change in your risk landscape.

1. Your Attack Surface Has Expanded Beyond Traditional Systems

AI systems are no longer isolated tools. They:

- Interact with APIs

- Execute workflows

- Access sensitive data

- Make decisions autonomously

The report highlights risks such as tool poisoning, memory manipulation, and context hijacking, which specifically target how AI systems operate.

What this means:

Your AI environment is now part of your core attack surface, not just an application layer.

2. Security Must Move From Static to Continuous

Traditional controls such as periodic assessments, static policies, and perimeter defenses are no longer sufficient.

AI systems:

- Evolve over time

- Act independently

- Interact dynamically with other systems

What this means:

You need:

- Continuous monitoring

- Runtime validation of AI behavior

- Real-time enforcement of controls

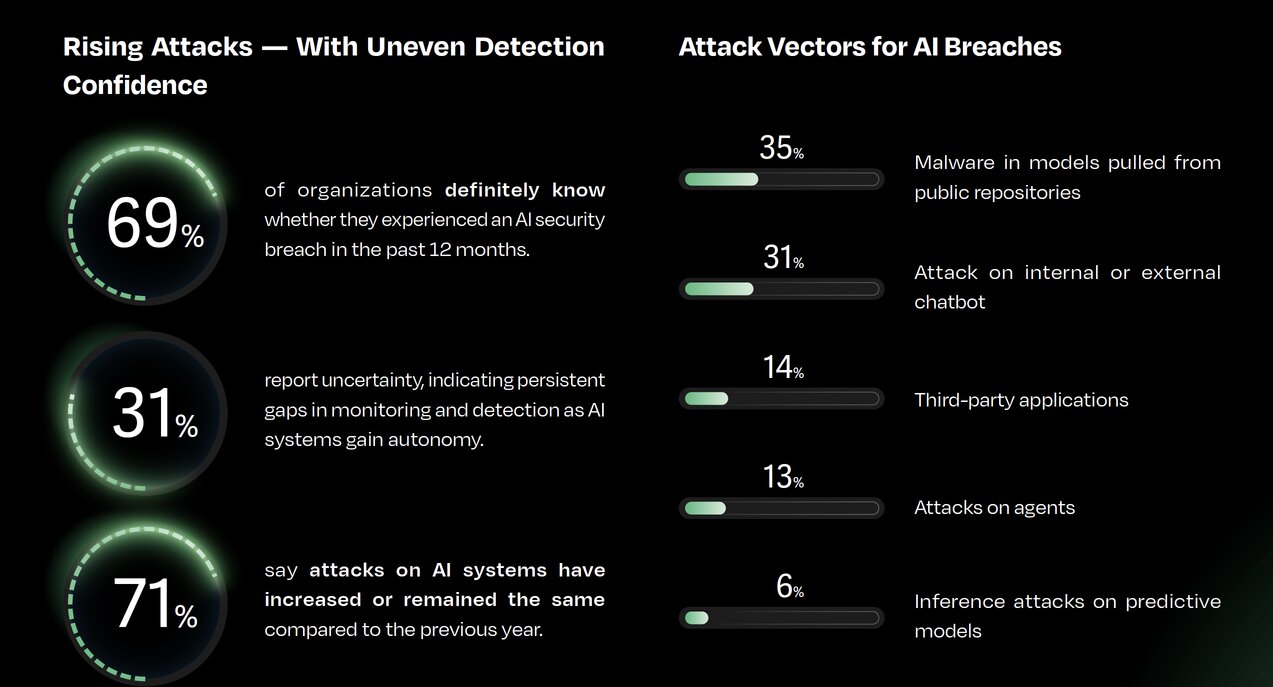

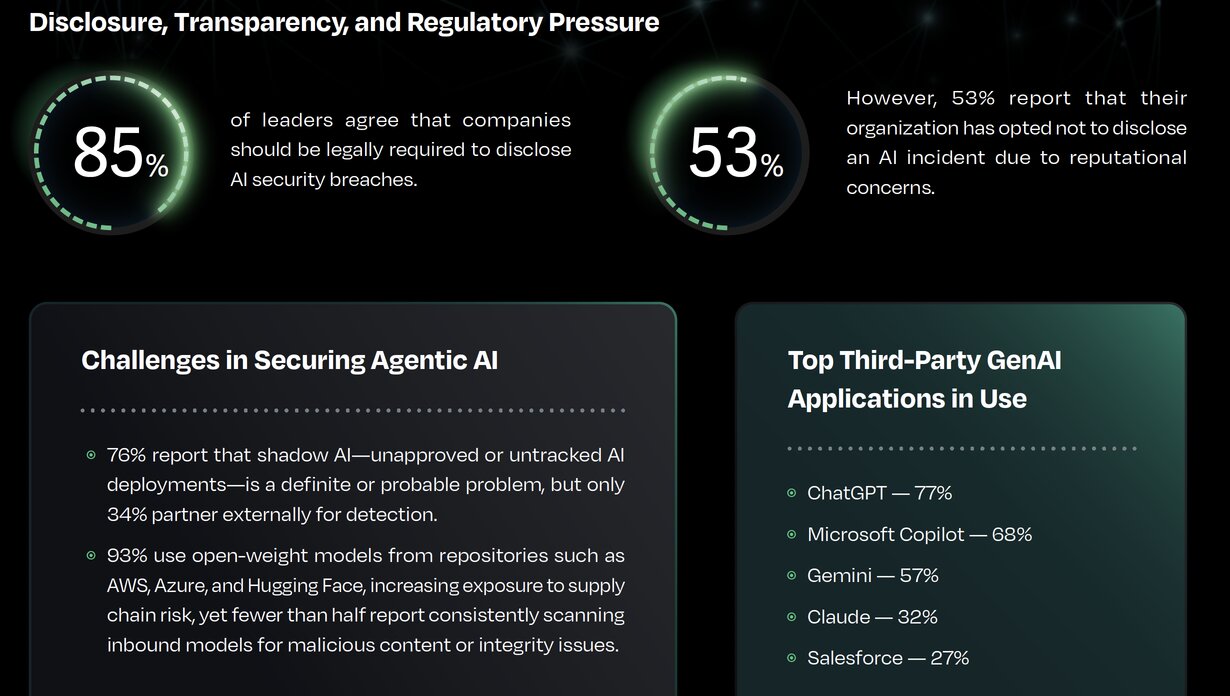

3. You Likely Have Visibility Gaps Today

The report reveals:

- Many organizations cannot confidently detect AI-related breaches

- Shadow AI (unapproved deployments) is widespread

- Detection and accountability remain fragmented

What this means:

There is a high likelihood that:

- AI is being used without governance

- Risks are not fully visible

- Incidents could go undetected
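One simple way to start closing these gaps is to compare the AI services actually observed in use against an approved inventory. The sketch below assumes you can already export both lists (for example from network logs and a governance register); the function name `find_shadow_ai` and the service names are hypothetical.

```python
def find_shadow_ai(observed: set[str], approved: set[str]) -> dict[str, list[str]]:
    """Compare AI services seen in use against the approved inventory."""
    return {
        # In use but never approved: candidates for shadow AI review.
        "unapproved": sorted(observed - approved),
        # Approved but never seen: possibly unused, or a monitoring blind spot.
        "not_observed": sorted(approved - observed),
    }


# Illustrative data only; real inputs would come from your own telemetry.
observed = {"internal-chatbot", "vendor-llm-api", "personal-copilot"}
approved = {"internal-chatbot", "vendor-llm-api", "fraud-model"}

report = find_shadow_ai(observed, approved)
print(report)
```

The second list matters as much as the first: an approved system that never appears in telemetry may simply be unused, or it may indicate that monitoring does not reach it.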

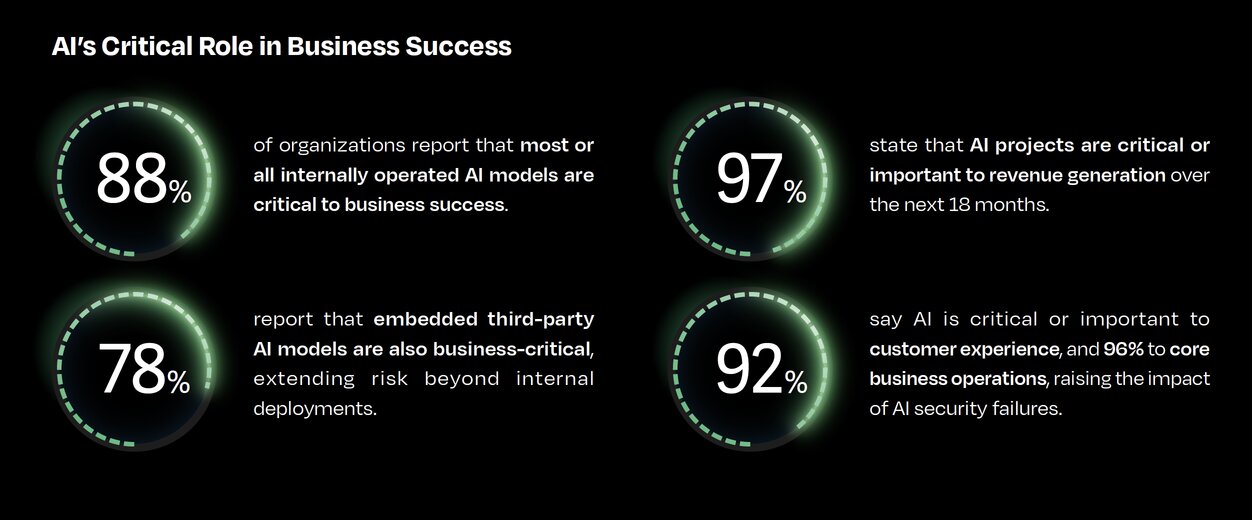

4. AI Is Now Business-Critical, and So Is the Risk

AI is increasingly:

- Critical to revenue

- Embedded in operations

- Central to customer experience

Failures in AI systems can lead to:

- Operational disruption

- Data exposure

- Reputational damage

What this means:

AI security is no longer just an IT concern; it is a business risk and board-level priority.

5. Attackers Are Scaling Faster Using AI

The report shows how AI is enabling:

- Automated cybercrime

- Deepfake identity fraud

- AI-assisted malware and phishing

- Autonomous attack campaigns

What this means:

Attackers now require less skill, move faster, and operate at scale. Your defenses must evolve accordingly.

6. Third-Party and Supply Chain Risk Is Increasing

Organizations rely heavily on:

- Open models

- Third-party AI tools

- External APIs

But these introduce:

- Supply chain vulnerabilities

- Model integrity risks

- Hidden dependencies

What this means:

You must:

- Validate third-party AI components

- Monitor model integrity

- Extend security beyond your internal environment
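A basic building block for monitoring model integrity is to pin a cryptographic hash of each third-party model artifact when it is first reviewed, then verify that hash before every load. The sketch below uses Python's standard `hashlib`; the helper names `sha256_of` and `verify_artifact` and the file name are assumptions for illustration, and a matching hash only shows the file is unchanged, not that the model itself is safe.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large model files do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_artifact(path: Path, expected_sha256: str) -> bool:
    """Return True only if the file's hash matches the pinned value."""
    return sha256_of(path) == expected_sha256.lower()


# Demonstration with a throwaway file standing in for a model artifact.
with tempfile.TemporaryDirectory() as tmp:
    artifact = Path(tmp) / "model.bin"
    artifact.write_bytes(b"example weights")
    pinned = sha256_of(artifact)                 # recorded at review time
    unchanged_ok = verify_artifact(artifact, pinned)
    artifact.write_bytes(b"tampered weights")    # simulate a swapped file
    tampered_ok = verify_artifact(artifact, pinned)

print(unchanged_ok, tampered_ok)
```

The pinned value should be stored somewhere the artifact's supplier cannot modify, such as an internal registry, so that a compromised download cannot ship with a matching hash.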

AI is transforming how organizations operate, but without the right controls, it is also expanding your attack surface.

Organizations that treat AI as just another application will fall behind. Those that treat it as a new security domain will be better positioned to innovate safely.

At Reputiva, we help organizations secure AI and cloud environments across AWS, Azure, and GCP, with a focus on visibility, identity security, and risk management.

Start with a Cloud & AI Security Assessment to identify risks, strengthen your posture, and build a secure foundation for AI adoption.